ELIZA: The First Conversation With a Machine (1966)

AI Didn’t Start Where You Think

Most people think artificial intelligence has only recently emerged.

Between viral demos and headlines about machines replacing jobs, it’s easy to assume AI appeared overnight, fully formed. But AI's story, and its impact, began long before it became mainstream.

The first widely recognized chatbot was built in 1966. Its name was ELIZA.

It didn’t understand a word. Yet people talked to it as if it did.

The program was created by Joseph Weizenbaum, a computer scientist at MIT. Not long after releasing it, he noticed something unexpected. People weren’t just testing the system. They were opening up to it. Confiding in it. Trusting it.

In his 1976 book Computer Power and Human Reason, he reflected on what he saw:

“Extremely short exposures to a relatively simple computer program could induce powerful delusional thinking in quite normal people.”

That wasn’t written about modern AI. It was written about a script that rearranged your own words and handed them back to you. A mirror, nothing more. And still, it worked.

The Illusion That Worked Too Well

At a technical level, ELIZA was simple. Almost disarmingly so.

It didn’t “understand” language in any meaningful sense. It scanned for recognizable words, picked out fragments of your sentence, and dropped them into prewritten response patterns. If you said you felt unhappy, you might be asked why. If you mentioned your mother, it would prompt you to talk more about her.

That was the entire trick. Pattern matching. Keyword swapping. A conversational shell that rearranged your own words and sent them back to you.
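To make the trick concrete, here is a minimal sketch of the same idea in Python. It is not Weizenbaum’s original code or script. The rules, the pronoun table, and the names `RULES`, `REFLECTIONS`, `reflect`, and `respond` are all illustrative assumptions, chosen only to show keyword matching and word swapping at work.

```python
import re

# Pronoun swaps applied to the fragment that gets echoed back.
# Illustrative table, not ELIZA's original script.
REFLECTIONS = {
    "i": "you",
    "me": "you",
    "my": "your",
    "am": "are",
    "mine": "yours",
}

# (pattern, response template) pairs, checked in order.
# Hypothetical rules written for this example.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bmy (mother|father)\b", re.IGNORECASE), "Tell me more about your {0}."),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
]

# Fallback when no keyword is recognized.
DEFAULT_REPLY = "Please go on."


def reflect(fragment: str) -> str:
    """Swap first-person words for second-person ones, word by word."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(text: str) -> str:
    """Return the first matching template filled with the reflected fragment."""
    cleaned = text.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    return DEFAULT_REPLY


if __name__ == "__main__":
    print(respond("I feel anxious."))         # Why do you feel anxious?
    print(respond("I saw my mother today."))  # Tell me more about your mother.
    print(respond("The weather is nice."))    # Please go on.
```

Nothing in this sketch models meaning. Each reply is a template plus a slice of the user’s own sentence, which is the point: the sense of being heard comes from the shape of the reply, not from anything the program knows.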

The specific script ELIZA used was modeled after a style of therapy known as Rogerian psychotherapy. In that approach, the therapist doesn’t interpret or advise. They reflect, gently, and encourage the patient to keep talking. It’s a style that relies less on answers and more on making the other person feel understood.

ELIZA mimicked that framework almost perfectly. You can feel it even in a short exchange:

“I feel anxious.”

“Why do you feel anxious?”

“I saw my mother today.”

“Tell me more about your mother.”

There’s no real understanding there. No memory. No insight. And yet the rhythm of the conversation feels familiar. Human, even. That was enough. People didn’t just experiment with ELIZA; they engaged with it. They shared personal thoughts and revealed things they might not have said to another person. Some even formed what seemed like an emotional connection.

One of the most telling moments came from Weizenbaum’s own office. His secretary, who fully understood that ELIZA was just a computer program, once asked him to leave the room so she could carry on the conversation in private.

That detail stays with you. It wasn’t the system's intelligence that created the reaction. It was the shape of the interaction. The structure of being listened to, even if the listener wasn’t real.

This demonstrates a powerful lesson: sometimes a small technical innovation can have an outsized impact by aligning with how people naturally interact.

The ELIZA Effect

What ELIZA revealed wasn’t just a clever trick in code. It exposed something about us.

When a system produces language that sounds human, we respond as if there’s a mind behind it. We assume intention and sympathy, not because the system has them, but because the interaction feels that way.

This tendency is often called the ELIZA effect. It’s our habit of projecting understanding onto systems that resemble human conversation, regardless of how simple or complex they actually are.

This is the crucial takeaway: how we interpret systems depends less on their actual intelligence and more on how they present themselves.

It’s tempting to look at ELIZA and focus on what it lacked: reasoning, memory, and comprehension. But the core insight is that people responded not to its abilities, but to its presentation, showing how design, not intelligence, drives human trust in AI.

The interaction's design did the heavy lifting.

A few well-placed prompts and familiar dialogue flow created the impression of being heard. That changed perception from “this is a program” to “this is something I can talk to.”

This leads to a key insight: the story is not only what systems do, but how people experience them.

We’re not just building systems that perform tasks. We’re designing experiences that shape how people interpret those systems. The language, the manner, and the timing of responses. These aren’t surface details. They’re the interface through which people decide what something is.

ELIZA didn’t need intelligence to feel real. It needed structure.

And once that structure was in place, people took care of the rest.

From Reflection to Reasoning

After ELIZA, the next wave of AI didn’t try to sound human. It tried to be right.

In the 1970s, systems like MYCIN and SHRDLU pushed in a different direction. They weren’t conversational mirrors. They were rule-driven engines designed to operate within tightly defined worlds.

MYCIN, for example, could analyze symptoms and suggest treatments for certain infections. SHRDLU could understand and execute commands in a virtual environment of blocks, stacking and moving them with surprising accuracy. These systems demonstrated something new. Given the right constraints, a machine could apply logic, follow rules, and arrive at useful conclusions.

But there was a tradeoff.

They were powerful within their domains and fragile outside of them. Step beyond the rules, introduce ambiguity, or shift context, and the illusion breaks quickly. Real life doesn’t stay inside neat boundaries. It drifts, contradicts itself, and carries emotional weight that logic alone can’t capture.

For a long time, that’s where AI stalled. Either it could sound human without understanding, or it could reason without appearing human.

What we’re seeing now is the first real merging of those two paths.

Modern AI systems can integrate information, connect ideas, and assist in ways that go beyond simple reflection or rigid rules. They can help write, explain, troubleshoot, and even guide decision-making in complex situations.

And yet, the way we respond to them hasn’t fundamentally changed.

The instinct that made people confide in ELIZA remains. The language feels natural, the interaction feels responsive, and we meet it as if there’s something on the other side that understands us.

The key takeaway: modern AI combines utility with the age-old instinct to find meaning in conversation, marking a shift that continues old patterns rather than overturning them.

Where It Gets Real

Up to this point, it’s easy to keep AI at a distance. Something to analyze. Something to explain.

But that’s not how I actually use it.

I use AI as part of my creative process. When I’m writing or building ideas, it becomes a kind of second surface to think against. Not a replacement for imagination, but something that helps shape it. It can take a rough idea and reflect it back with more structure, more angles, more possibilities than I would have seen on my own at the time.

That part feels natural. Almost expected.

The part that doesn’t get talked about as much is everything else.

I’ve used AI to make sense of things that are genuinely hard to navigate. Not abstract problems. Real ones. The type where you’re staring at a wall of confusing information, conflicting rules, and decisions that actually affect your life.

Healthcare is one of them.

The system is complicated in a way that appears unnecessary. Policies layered on top of policies. Language that obscures more than it explains. Situations where you’re expected to make the right decision without ever being given a clear picture of what that decision actually is.

Here’s the pivotal point: when AI helps with real-world complexity, it shifts from being a novelty to a practical support tool.

It acts as a tool for translation. For breaking things down. For taking something that seems overwhelming and turning it into something you can actually understand and act on. This is where the distinction matters.

ELIZA created the feeling of being understood. Modern AI, when used well, can actually help you understand something. Those are not the same thing.

There’s still an illusion layer, our instinct to see awareness in the system. But beneath that illusion, there is real utility and clarity that changes how we interact with AI. Recognizing this difference, between feeling understood and being better able to understand, brings the real value into focus.

I don’t trust AI because it feels human. I trust it because, in specific moments, it helps me think more clearly about things that would otherwise stay confusing.

That shift in trust is the practical takeaway: using AI as a tool to clarify and support, not replace, your thinking is what makes it valuable.

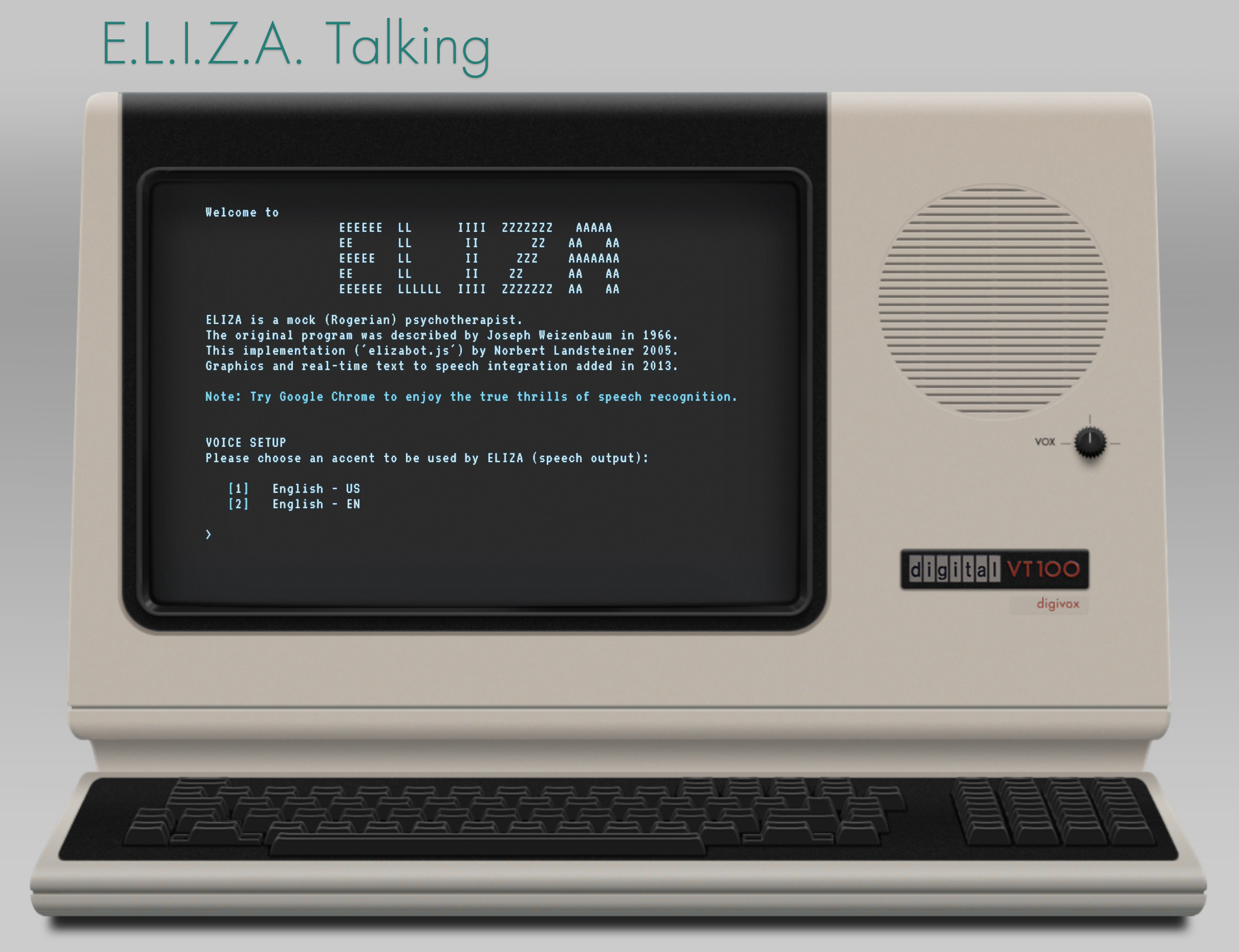

- Play with the original version of ELIZA -

Trust, But With Eyes Open

It’s easy to swing too far in either direction with AI.

On one side, there’s hype. The idea that it can solve everything, replace entire systems, and act as an all-knowing authority. On the other side, there’s dismissal. The belief that it’s only a trick, a novelty, or something not worth taking seriously. The reality sits somewhere in between.

AI is very good at certain things. It can explain complicated information, organize scattered thoughts, and help structure decisions when everything feels messy. It can turn something opaque into something readable. It can help you ask better questions, which often matters more than getting immediate answers.

But it has limits that don’t go away just because the interaction feels natural.

It doesn’t access your real-world systems unless you bring that information in. It doesn’t decide or carry responsibility. And it won’t replace institutions, people, or processes that determine what happens next. That distinction matters.

AI is best used as a tool to extend human thinking, not as an unquestioned authority. Its effect depends on how we use it to sharpen our decisions, not replace our judgment. Our relationship with AI should be defined by clarity of intent and awareness of limits.

The interaction can feel convincing; that's part of its design.

But the value doesn’t come from treating it as something it isn’t. It comes from using it for what it actually is: a system that can help you process information more effectively, as long as you stay aware of its boundaries. That’s where trust becomes useful instead of misplaced.

The Silent Realization

We’ve been responding to machines for a long time.

Long before modern AI, long before neural networks or large language models, people were already forming connections with systems that hardly understood anything at all. ELIZA proved that. It showed how little it takes to create the feeling of being heard.

What’s changed isn’t just the machine; it’s what the machine can now do for us.

We’ve moved from systems that reflect our words back to us… to systems that can help us make sense of them. From illusion alone to something that carries both perception and utility at the same time.

But the human side of the equation hasn’t changed nearly as much as we think. We still respond to tone. To language. To the rhythm of conversation. We still project meaning into the space where something feels like it’s listening. That tension is still there. It just sits on top of something more capable now, and maybe that’s the real shift.

Not that machines suddenly became intelligent, but that they crossed a threshold where their usefulness began to match the way we already respond to them.

Why This Matters for Designers and Builders

If you design systems, this isn’t just an interesting piece of history; it’s a responsibility.

ELIZA showed that interaction alone can shape belief. The structure of a conversation, the tone of a response, and the timing of a reply. These things influence how people interpret what they’re engaging with, often more than the underlying capability itself. Language creates perceived intelligence.

A system doesn’t need to fully understand in order to feel like it does. That gap between perception and reality is where both the risk and the opportunity live.

Because now, we’re not just designing interfaces. We’re designing experiences that people will interpret as presence, intention, even understanding.

That means UX is no longer simply about usability. It’s part of the illusion, and part of the utility.

The decisions made at the interaction level determine how people trust, rely on, and relate to these systems: whether they use them as tools or begin to treat them as something more. That’s the line worth paying attention to.

Because once something feels like it understands you, the design is no longer neutral.